Management cluster setup for bare metal

Creating a bootstrap cluster

This guide focuses on creating a bootstrap cluster, which is a local management cluster created with kind.

To create a bootstrap cluster, you can use the following command:
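A minimal sketch, assuming kind and kubectl are installed. The cluster name caph-mgt-cluster is a hypothetical choice; kind names the matching kubectl context kind-<cluster-name>:

```shell
# Create a local kind cluster that acts as the bootstrap (management) cluster.
# The name is illustrative; omit --name to use kind's default cluster name.
kind create cluster --name caph-mgt-cluster

# Confirm that kubectl talks to the new cluster.
kubectl cluster-info --context kind-caph-mgt-cluster
```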

After creating the bootstrap cluster, you also need to export some environment variables. You can find out which variables need to be exported by running the following command:
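A sketch of that step, assuming clusterctl is installed and can resolve the hetzner infrastructure provider. With --describe, clusterctl should print a text summary of the provider, including the variables its components use, rather than the rendered manifests:

```shell
# Describe the Hetzner infrastructure provider, including the variables it expects.
clusterctl generate provider --infrastructure hetzner --describe
```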

clusterctl needs a specific infrastructure provider version when rendering provider-specific templates. If the version is not already known in your local clusterctl state, auto-detection can fail.

You can find available CAPH versions on the GitHub tags page.
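If auto-detection fails, you can pin the version explicitly with clusterctl's provider:version syntax. In this sketch, vX.Y.Z is a placeholder for a tag taken from the GitHub tags page:

```shell
# Pin an explicit CAPH version so clusterctl does not have to auto-detect it.
# Replace vX.Y.Z with an existing CAPH release tag.
clusterctl generate provider --infrastructure hetzner:vX.Y.Z --describe
```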

These variables are used during the deployment of the Hetzner infrastructure provider in the cluster.

Installing the Hetzner provider can be done using the following command:
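A minimal sketch, assuming the variables mentioned above are exported in your shell and that you use the kubeadm bootstrap and control-plane providers (an assumption; choose the providers that match your setup):

```shell
# Install core Cluster API, the kubeadm bootstrap and control-plane providers,
# and the Hetzner infrastructure provider (CAPH) into the bootstrap cluster.
clusterctl init \
  --core cluster-api \
  --bootstrap kubeadm \
  --control-plane kubeadm \
  --infrastructure hetzner
```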

Preparing Hetzner Robot

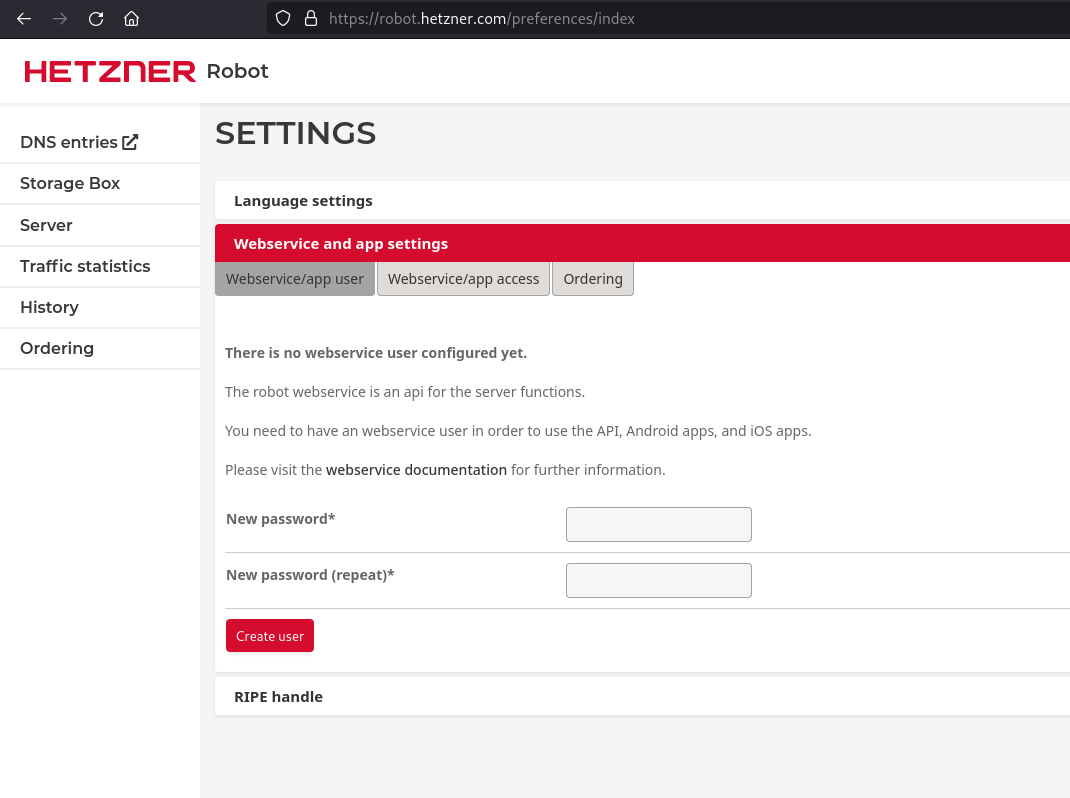

- Create a new web service user. Here, you can define a password and copy your user name.

- Generate an SSH key. You can either upload it via the Hetzner Robot UI or rely on the controller to upload a key that it does not find in the Robot API. You have to store the public and private key, together with the SSH key's name, in a secret that the controller reads.

For this tutorial, we let the controller upload the key to Hetzner Robot.

Creating a new user in Robot

To create a new user in Robot, click the Create User button in the Hetzner Robot console. After you create the user, Hetzner Robot sends you the user ID by email. The password is the one you set while creating the user.

You need these credentials for the next steps.

Creating and verifying an SSH key in hcloud

First, create an SSH key locally. You can use the ssh-keygen command for this.
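For example, assuming an ed25519 key is acceptable for your setup (the key type is an assumption; the path ~/.ssh/caph matches the ~/.ssh/caph.pub file referenced in this section):

```shell
# Generate a key pair: ~/.ssh/caph (private key) and ~/.ssh/caph.pub (public key).
# ssh-keygen asks for a passphrase; decide whether your workflow needs one.
ssh-keygen -t ed25519 -f ~/.ssh/caph
```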

ssh-keygen creates a public and a private key in your ~/.ssh directory.

Upload the public key ~/.ssh/caph.pub to your hcloud project: open your project, go to Security -> SSH Keys, click Add SSH key, paste your public key, and enter my-caph-ssh-key as the name of the key.

Alternatively, you can upload the SSH key with the hcloud CLI.
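A sketch using the hcloud CLI, assuming it is installed and an active context for your project already exists (for example, one created with hcloud context create):

```shell
# Upload the public key to the active hcloud project under the name my-caph-ssh-key.
hcloud ssh-key create --name my-caph-ssh-key --public-key-from-file ~/.ssh/caph.pub

# Verify that hcloud now lists the key under the expected name.
hcloud ssh-key list
```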

In the step above, the SSH key is registered in hcloud under the name my-caph-ssh-key. This name is important because it is referenced later.

This is an important step because the same SSH key is used to access the servers. Make sure you use the correct SSH key name.

my-caph-ssh-key is the name of the SSH key created above, and the generated manifest references my-caph-ssh-key as the SSH key name.

If you want to use a different name, modify it accordingly.

Create Secrets In Management Cluster (Hcloud + Robot)

For the Hetzner provider integration to communicate with the Hetzner API (HCloud API + Robot API), we need to create secrets that contain the access data. The secrets must be in the same namespace as the other CRs.

We create two secrets: hetzner, for access to the Hetzner Cloud and Robot APIs, and robot-ssh, for provisioning bare metal servers via SSH. The hetzner secret contains the HCloud API token as well as the username and password used to interact with the Robot API. The robot-ssh secret contains the public key, the private key, and the name of the SSH key used for the bare metal servers.
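A minimal sketch of creating both secrets with kubectl, assuming the environment variables described in this section are exported and the CRs live in the current namespace. Only sshkey-name is named in this guide; the other data keys (hcloud, robot-user, robot-password, ssh-privatekey, ssh-publickey) are assumptions, so check them against the secret references in your cluster template:

```shell
# API credentials: HCloud token plus Robot web service user and password.
# Data key names other than sshkey-name are assumptions.
kubectl create secret generic hetzner \
  --from-literal=hcloud="$HCLOUD_TOKEN" \
  --from-literal=robot-user="$HETZNER_ROBOT_USER" \
  --from-literal=robot-password="$HETZNER_ROBOT_PASSWORD"

# SSH key used to provision bare metal servers: key name plus both key files.
kubectl create secret generic robot-ssh \
  --from-literal=sshkey-name="$SSH_KEY_NAME" \
  --from-file=ssh-privatekey="$HETZNER_SSH_PRIV_PATH" \
  --from-file=ssh-publickey="$HETZNER_SSH_PUB_PATH"
```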

- HCLOUD_TOKEN: The API token of the HCloud project where your cluster will be placed. You have to get the token from your HCloud project.
- HETZNER_ROBOT_USER: The user you have defined in Robot under settings/web.
- HETZNER_ROBOT_PASSWORD: The Robot password you have set in Robot under settings/web.
- SSH_KEY_NAME: The name of the SSH key you want to use.
- HETZNER_SSH_PUB_PATH: The path to your generated public SSH key.
- HETZNER_SSH_PRIV_PATH: The path to your generated private SSH key. This is needed because CAPH uses this key to provision the node in Hetzner Dedicated.
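Before creating the secrets, a small check can catch unset variables early. check_hetzner_env is a hypothetical helper, not part of CAPH; it prints the name of each missing variable (or missing key file) and returns non-zero if anything is missing:

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify the variables needed for the hetzner and robot-ssh secrets.
# Prints one line per problem and returns 1 if any variable or key file is missing.
check_hetzner_env() {
  local missing=0 var
  for var in HCLOUD_TOKEN HETZNER_ROBOT_USER HETZNER_ROBOT_PASSWORD \
             SSH_KEY_NAME HETZNER_SSH_PUB_PATH HETZNER_SSH_PRIV_PATH; do
    # ${!var:-} reads the variable whose name is stored in $var (empty if unset).
    if [ -z "${!var:-}" ]; then
      echo "$var"
      missing=1
    fi
  done
  # The key paths must also point at existing files.
  for var in HETZNER_SSH_PUB_PATH HETZNER_SSH_PRIV_PATH; do
    if [ -n "${!var:-}" ] && [ ! -f "${!var}" ]; then
      echo "$var (file not found: ${!var})"
      missing=1
    fi
  done
  return "$missing"
}
```

For example, after exporting the variables, run check_hetzner_env && echo ready before creating the secrets.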

sshkey-name (from SSH_KEY_NAME) must match the name of the key in Hetzner; otherwise, the controller will not know how to reach the machine. You can upload SSH keys via the Robot UI (Server / Key Management).

Patch the created secrets so that they are automatically moved to the target cluster later.
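A sketch of that patch, assuming the secrets are named hetzner and robot-ssh and live in the current namespace. The clusterctl.cluster.x-k8s.io/move label marks objects that clusterctl move should copy to the target cluster:

```shell
# Add the clusterctl move label (empty value) to both secrets.
kubectl patch secret hetzner \
  -p '{"metadata":{"labels":{"clusterctl.cluster.x-k8s.io/move":""}}}'
kubectl patch secret robot-ssh \
  -p '{"metadata":{"labels":{"clusterctl.cluster.x-k8s.io/move":""}}}'
```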

The secret name and the tokens can also be customized in the cluster template.

Creating Host Object In Management Cluster

To use bare metal servers as nodes, create a HetznerBareMetalHost object for each bare metal server you bought and specify its server ID in the spec.
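A sample manifest, applied through a shell heredoc so it can be pasted directly. The object name bm-0, the server ID 1234567, and the WWN placeholder are hypothetical, and the apiVersion infrastructure.cluster.x-k8s.io/v1beta1 is an assumption to check against your CAPH version:

```shell
# Register one dedicated server as a HetznerBareMetalHost (all values are placeholders).
kubectl apply -f - <<'EOF'
apiVersion: infrastructure.cluster.x-k8s.io/v1beta1
kind: HetznerBareMetalHost
metadata:
  name: bm-0
spec:
  serverID: 1234567            # server ID of your dedicated server in Hetzner Robot
  rootDeviceHints:
    wwn: "<wwn-of-root-disk>"  # WWN of the disk to boot from, if already known
EOF
```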

If you already know the WWN of the storage device you want to boot from, specify it in the rootDeviceHints of the object. If not, you can proceed without it. During the provisioning process, the controller fetches information about all available storage devices and stores it in the status of the object.

For example, consider a HetznerBareMetalHost object that does not specify a WWN.
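A sketch of such an object, with the hypothetical name bm-0, the hypothetical server ID 1234567, and an assumed apiVersion. Note that it has no rootDeviceHints:

```shell
# HetznerBareMetalHost without rootDeviceHints: the controller is expected to
# collect the storage devices into the object's status during provisioning.
kubectl apply -f - <<'EOF'
apiVersion: infrastructure.cluster.x-k8s.io/v1beta1
kind: HetznerBareMetalHost
metadata:
  name: bm-0
spec:
  serverID: 1234567
EOF
```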

In this object, we have not specified any WWN, and we apply it to the cluster as is.

After a while, the provisioning of the HetznerBareMetalHost object you just applied reports an error.

Once you see the error, get the YAML output of the HetznerBareMetalHost object. Its status contains the list of storage devices and their WWNs.
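For example, with the hypothetical host name bm-0. The second command is an optional shortcut; the field path .status.hardwareDetails.storage and its name and wwn keys are assumptions based on the hardwareDetails mentioned in this guide:

```shell
# Full object, including the status written by the controller.
kubectl get hetznerbaremetalhost bm-0 -o yaml

# Only the name and WWN of each storage device (field path is an assumption).
kubectl get hetznerbaremetalhost bm-0 \
  -o jsonpath='{range .status.hardwareDetails.storage[*]}{.name}{"\t"}{.wwn}{"\n"}{end}'
```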

In our example, the output shows that this bare metal server has two disks, each with its own WWN. You can also verify this by connecting to the rescue system via SSH and running lsblk.
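A sketch, assuming the server is currently booted into the rescue system and accepts the key ~/.ssh/caph; <server-ip> is a placeholder:

```shell
# Log in to the rescue system as root.
ssh -i ~/.ssh/caph root@<server-ip>

# Inside the rescue system: list whole disks (no partitions) with their WWNs.
lsblk --nodeps --output name,type,wwn
```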

Now that we have confirmed the WWNs of the two disks, we can use either of them. We use kubectl edit to add the chosen WWN to the rootDeviceHints of the HetznerBareMetalHost object.
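For example, with the hypothetical host name bm-0. kubectl edit opens the object in your editor, where you add the WWN under spec.rootDeviceHints; the kubectl patch variant is a non-interactive alternative that sets the same field (the WWN is a placeholder):

```shell
# Interactive: add spec.rootDeviceHints.wwn in your editor.
kubectl edit hetznerbaremetalhost bm-0

# Non-interactive alternative: set the WWN with a JSON merge patch.
kubectl patch hetznerbaremetalhost bm-0 --type merge \
  -p '{"spec":{"rootDeviceHints":{"wwn":"<wwn-of-chosen-disk>"}}}'
```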

Defining rootDeviceHints on your bare metal server is important; otherwise, the server will not be able to join the cluster.

If you have more than one disk, it is recommended to use the smaller disk for the OS installation, so that the data on the other disk is retained between provisionings of the machine.
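To pick the smaller disk programmatically, the following sketch reads lsblk output in the rescue system and prints the WWN of the smallest disk. smallest_disk_wwn is a hypothetical helper, not part of CAPH; it assumes the column order NAME TYPE SIZE WWN with sizes in bytes, and it skips devices that are not disks or have no WWN:

```shell
#!/usr/bin/env bash
# Hypothetical helper: read "NAME TYPE SIZE WWN" lines (SIZE in bytes) on stdin
# and print the WWN of the smallest disk. Returns 1 if no disk has a WWN.
smallest_disk_wwn() {
  awk '
    # Only whole disks that actually report a WWN (4th column present).
    $2 == "disk" && NF >= 4 {
      size = $3 + 0
      if (best == "" || size < best_size) {
        best = $4
        best_size = size
      }
    }
    END {
      if (best == "") exit 1
      print best
    }'
}

# Usage in the rescue system:
#   lsblk --nodeps --bytes --noheadings --output NAME,TYPE,SIZE,WWN | smallest_disk_wwn
```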

After the updated object is applied to the cluster, the provisioning of the machine succeeds.

To summarize, if you don't know the WWN of your storage device, there are two ways to find it:

- Create the HetznerBareMetalHost without a WWN and wait for the controller to fetch all information about the available storage devices. Afterwards, look at the status of the HetznerBareMetalHost by running kubectl get hetznerbaremetalhost <name-of-hetzner-baremetalhost> -o yaml in your management cluster. There you will find the hardwareDetails of your bare metal host, which include a list of all relevant storage devices and their properties. Copy and paste the WWN of your desired storage device into the rootDeviceHints of your HetznerBareMetalHost object.

- SSH into the rescue system of the server and run:

  lsblk --nodeps --output name,type,wwn

There might be cases where a server has more than two disks.

In that case (for example, a server with four disks), you can use any of the disks available on the bare metal server.
